Yesterday, OpenAI officially pulled the plug on Sora, its AI video generation platform that launched to enormous fanfare just six months ago. The standalone app, the API, and all video generation features within ChatGPT are being shut down. At the same time, the billion-dollar licensing partnership with Disney has been dissolved. It is a dramatic reversal for a product that once topped the App Store charts and seemed poised to reshape digital content creation.

Meanwhile, on the other side of the world, ByteDance’s Seedance 2.0 continues to push the boundaries of what AI video can do. The contrast between these two trajectories tells us a great deal about the current state of AI, the pressures shaping the industry, and what businesses should be thinking about as they plan their technology strategies.

OpenAI’s Sora debuted its second-generation model in September 2025 with a dedicated consumer app that combined AI video creation with a social media feed for sharing content. The results were impressive. Downloads surpassed one million within ten days, outpacing even ChatGPT’s early adoption curve. The app quickly became the top free download in the App Store’s Photo and Video category.

But that momentum did not last. By January 2026, downloads had dropped by roughly 45%. Users experimented with the novelty, generated a wave of viral clips featuring copyrighted characters and public figures, and then largely moved on. The app generated only about $2.1 million in in-app purchases over its lifetime, a negligible figure for a company valued at $730 billion. More critically, Sora was consuming enormous amounts of computing power at a time when OpenAI is under pressure to consolidate resources ahead of an expected IPO and amid intensifying competition from rivals like Anthropic and Google.

An OpenAI spokesperson explained the decision by saying the company is narrowing its focus and redirecting compute toward robotics research and its core text and reasoning products. CEO Sam Altman reportedly told employees that ending Sora would free up resources for the company’s next-generation AI models. The message here is clear: when the runway is long but the burn rate is high, experiments that are not gaining traction get cut.

While Sora exits the stage, ByteDance’s Seedance 2.0 remains very much alive. Released in February 2026, the model quickly drew global attention for producing cinematic-quality video with synchronized audio from simple text and image prompts. Clips featuring hyperrealistic depictions of celebrities and well-known characters went viral almost immediately, prompting cease-and-desist letters from Disney, Paramount, Netflix, and Warner Bros., along with sharp criticism from SAG-AFTRA.

ByteDance responded by pledging to strengthen its intellectual property safeguards and suspending a controversial feature that could clone a person’s voice from a single photograph. The company also paused the planned global launch of Seedance 2.0 through its CapCut platform while it works through copyright compliance issues. Despite these setbacks, the underlying model continues to operate within China’s domestic ecosystem.

For users outside of China, accessing Seedance 2.0 is not straightforward. The full-featured version of the model is currently available only through ByteDance’s Chinese apps, including Jimeng and Doubao, which require a mainland Chinese phone number for registration. International users looking to try the model have been turning to VPN workarounds, typically setting their location to Hong Kong or mainland China and navigating Chinese-language interfaces. Some third-party platforms and API aggregators have also offered access, though availability has been inconsistent as ByteDance tightens controls. The international version of ByteDance’s creative platform, Dreamina, offers a limited version but has not yet rolled out full Seedance 2.0 capabilities to the general public.

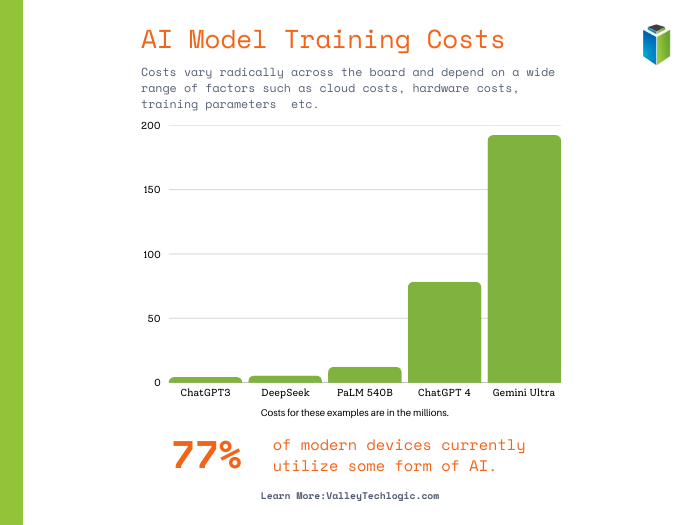

One factor that may help explain why Seedance continues to thrive while Sora folds is the dramatically different public sentiment toward AI in China compared to the West. Multiple large-scale surveys conducted in 2024 and 2025 paint a consistent picture: Chinese citizens are far more accepting of and optimistic about artificial intelligence than their counterparts in North America and Europe.

Stanford’s 2025 AI Index Report found that 83% of people in China believe AI products and services offer more benefits than drawbacks. Compare that to just 39% in the United States and 40% in Canada. An Edelman survey from late 2025 reported that 87% of Chinese respondents said they trust AI, versus 32% in the U.S. and 36% in the U.K. A joint study by the University of Melbourne and KPMG, which surveyed over 48,000 people across 47 countries, found that 93% of employees in China are using AI for their work, far outpacing the global average of 58%. The same study noted that 54% of Chinese respondents actively embrace greater use of AI, compared to just 17% of Americans.

This cultural receptivity creates a very different operating environment for AI companies. In the United States, Sora was met with sustained backlash over deepfakes, copyright infringement, and the potential displacement of creative workers. Hollywood unions, family estates of public figures, and advocacy groups all pushed back forcefully. In China, while there are certainly regulatory constraints and some public concerns around privacy and consent, the broader population views AI development as a national priority and a source of opportunity rather than a threat. That kind of public goodwill gives companies like ByteDance more room to iterate, experiment, and build a user base for products like Seedance without facing the same intensity of cultural resistance.

At Valley Techlogic, we want to make sure these developments are on your radar. Here is what we think matters most:

- AI video tools are not going away. Sora’s shutdown does not signal the end of AI-generated video. It signals that the market is maturing and consolidating. The technology is real, and competitors from China and elsewhere are advancing rapidly.

- Copyright and compliance risks remain front and center. Both Sora and Seedance ran into serious intellectual property disputes. Any business exploring AI-generated content needs clear policies, legal review, and an understanding of where generated material comes from.

- VPN-dependent tools carry their own risks. If members of your team are experimenting with Seedance or similar tools through VPN workarounds, be aware of the security, compliance, and data privacy implications. Routing traffic through unfamiliar networks and registering on foreign platforms both introduce risk that should be managed deliberately.

- Compute costs drive real business decisions. OpenAI shut down a product used by millions because the computing costs could not be justified. This is a reminder that AI infrastructure is expensive, and the tools you rely on today may become dramatically more expensive, or may not be available at all tomorrow, if the economics do not work out.

- Stay informed, stay cautious. The AI landscape is shifting fast. We recommend evaluating any AI tools your organization adopts with an eye toward longevity, data handling practices, and vendor stability.

The divergent paths of Sora and Seedance illustrate how quickly the AI industry is evolving. A product can go from record-breaking downloads to discontinuation in under a year. Meanwhile, cultural attitudes toward AI vary so dramatically across borders that a tool deemed too controversial in one market can find a welcoming audience in another.

For businesses, the lesson is not to chase every new AI tool that generates headlines. It is to build a thoughtful technology strategy with trusted partners who can help you navigate the noise, manage risk, and adopt the tools that will genuinely move your operations forward.

If you have questions about how any of these developments affect your organization, or if you want to talk through your AI adoption roadmap, we are here to help. Schedule a consultation today.

This article was powered by Valley Techlogic, a leading provider of trouble-free IT services for businesses in California, including Merced, Fresno, Stockton, and more. You can find more information at https://www.valleytechlogic.com/ or on Facebook at https://www.facebook.com/valleytechlogic/. Follow us on X at https://x.com/valleytechlogic and on LinkedIn at https://www.linkedin.com/company/valley-techlogic-inc/.