ChatGPT is a powerful AI chatbot that lets users ask it a question and receive a thorough answer on almost any topic they can dream up. Created by OpenAI, which is already fielding massive investment offers from companies like Microsoft, it has generated a ton of buzz in the news, both positive and negative.

It first came under scrutiny when it became apparent the tool was great for generating lots of content quickly, including articles that students could use and submit (though the quality of these articles can vary greatly).

This is because tools like ChatGPT are built on great swaths of content scraped from the internet. Whether such a tool is being asked to write a paper on the Civil War or generate a Picasso-esque picture, it takes the prompt, draws on the knowledge it has built up from data readily available online, and provides the user with what they’ve asked for.

There has been a lot of discussion around the future of AI and its ramifications for copyright, particularly when it comes to original written works or art, but today we’d like to focus on ChatGPT’s scripting capabilities and their potential pros and cons.

As leaders in the IT space, we were already aware of the buzz around ChatGPT’s scripting capabilities. Some programmers praised its ability to create simple scripts and its potential to make aspects of their jobs easier, while others lamented what it meant for the programming role as a whole or questioned whether the code output was really “up to snuff,” especially when used in real-world applications.

It’s become clear there’s a niche for ChatGPT in creating low-level tools, but this unfortunately also includes malware and encryption scripts, which often aren’t very complicated and are easily deployed via phishing-type scams.

As reported by Axios, there is already evidence that hackers are using ChatGPT in the creation of malware or in improving their existing attempts to create new malware scripts. There is also evidence that it’s being used by less technically inclined people to create malware they otherwise would not be able to make.

OpenAI has stated that they are looking to improve their product and prevent it from being abused. In the interim, we would advise users to be especially cautious when clicking on links or downloading files. We wrote an article on how to spot phishing clues online that might be worth a review.

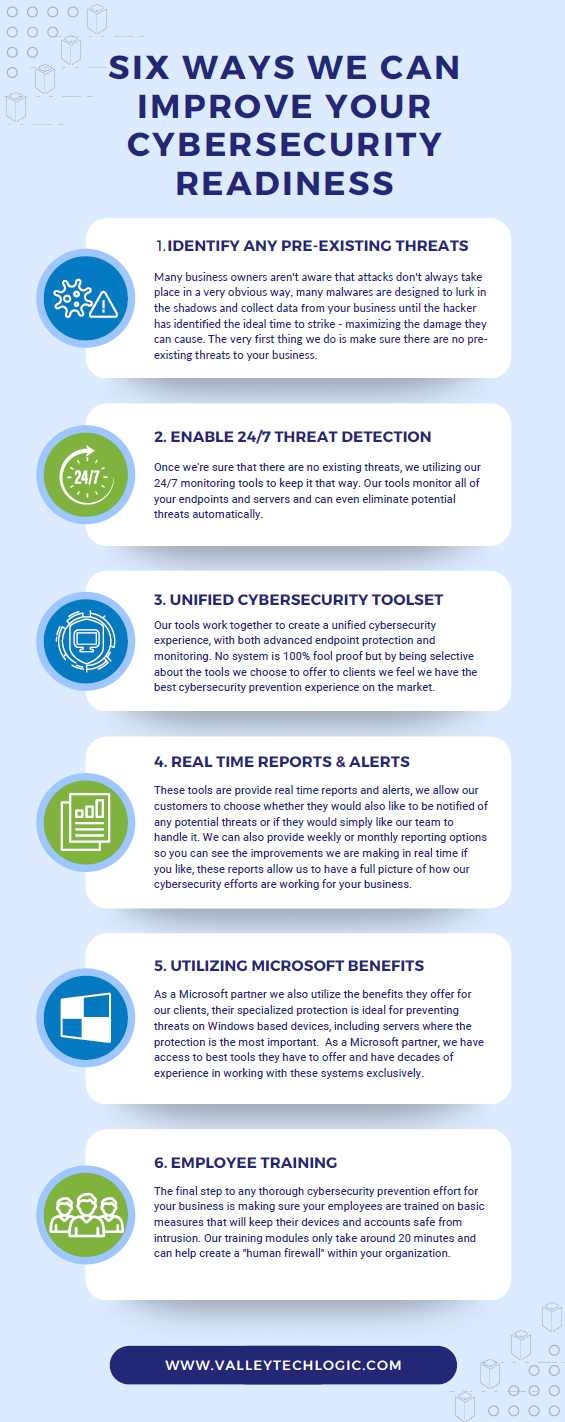

For businesses who have made getting serious about cybersecurity a primary goal in 2023, here are 6 ways Valley Techlogic can help.

Looking to learn more? Schedule a quick consultation with us today or take advantage of our 2-hour free service offer to experience our commitment to quality service for yourself.

Looking for more to read? We suggest these other articles from our site.

- 10 Holiday Shopping Tips for Safer Online Shopping
- Thinking about buying new tech for your business in 2023? Here are our top 10 tips
- High Tech Holidays – Five Ways Technology Can Make Your Holiday Season Easier
- Hackers and the holidays, US government warns ransomware doesn’t take days off
- Black Friday Isn’t just for consumer purchases, business owners can take advantage as well with these tech deals

This article was powered by Valley Techlogic, an IT service provider in Atwater, CA. You can find more information at https://www.valleytechlogic.com/ or on Facebook at https://www.facebook.com/valleytechlogic/ . Follow us on Twitter at https://x.com/valleytechlogic.
